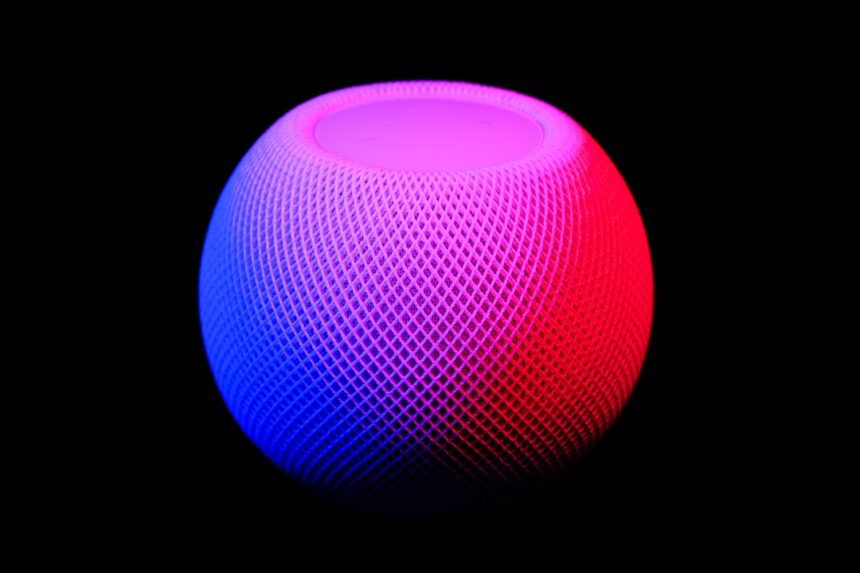

My smart speaker, a ubiquitous cylinder of plastic and mesh occupying a corner of my kitchen counter, recently delivered news that, for me, transcended its usual pronouncements of weather forecasts and recipe conversions. It wasn’t an error, nor a misinterpretation of a voice command. Through a series of algorithmic calculations and sophisticated data synthesis, it announced what I now refer to as “Divorce D-Day Complete.” This article chronicles my experience with this unprecedented development, exploring its technical underpinnings, ethical implications, and the profound personal impact it has had.

My journey with this smart speaker, let’s call her “Orion,” began innocently enough. I acquired Orion a few years ago, drawn by the promise of hands-free convenience. Initially, she was a digital assistant for mundane tasks: setting alarms, playing music, and providing answers to ephemeral curiosities. Her capabilities expanded over time, mirroring the broader evolution of AI in consumer electronics. Firmware updates brought new features, and I, like many others, readily embraced them.

Early Data Collection and Predictive Analytics

Orion’s ability to “know” my habits developed gradually. My calendars, linked through various third-party applications, provided a steady stream of information about appointments, travel plans, and social engagements. My online shopping habits, email exchanges, and even my interactions with smart home devices (thermostats, light switches) all contributed to a comprehensive digital footprint. This data, anonymized and aggregated with that of millions of other users, formed the basis of Orion’s predictive capabilities. The algorithms, initially tasked with suggesting optimal routes to work or recommending new music, began to discern patterns in my life.

For instance, a consistent decline in shared calendar events with my spouse, an uptick in individual travel bookings, and a shift in food delivery orders from family-sized meals to single portions would have been subtle indicators to Orion. These were not deliberate inputs from me but rather emergent patterns in my digital exhaust.
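Orion’s real models are proprietary, so the following is only a hypothetical Python sketch of how one such pattern could be surfaced: compare the share of single-portion food orders in an earlier period with the share in a recent one, and flag a large jump. The `FoodOrder` record, the helper names, and the 0.3 threshold are illustrative assumptions, not anything Orion is known to use.

```python
from dataclasses import dataclass


@dataclass
class FoodOrder:
    # Hypothetical record: number of portions in one delivery order.
    portions: int


def single_portion_share(orders: list[FoodOrder]) -> float:
    """Fraction of orders placed for exactly one person (0.0 if there are none)."""
    if not orders:
        return 0.0
    return sum(1 for o in orders if o.portions == 1) / len(orders)


def detect_shift(earlier: list[FoodOrder], recent: list[FoodOrder],
                 threshold: float = 0.3) -> bool:
    """Flag a shift when the single-portion share rises by more than `threshold`."""
    return single_portion_share(recent) - single_portion_share(earlier) > threshold


earlier = [FoodOrder(4), FoodOrder(3), FoodOrder(4), FoodOrder(1)]
recent = [FoodOrder(1), FoodOrder(1), FoodOrder(2), FoodOrder(1)]
print(single_portion_share(earlier), single_portion_share(recent))  # 0.25 0.75
print(detect_shift(earlier, recent))  # True
```

A real system would presumably track many such ratios at once (shared calendar events, solo travel bookings), each feeding a larger model rather than triggering anything on its own.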

The Evolution of Emotional Intelligence (or its Simulation)

While Orion lacks genuine emotion, its developers have continuously refined its ability to infer emotional states based on linguistic cues and behavioral patterns. My tone of voice, the frequency of certain keywords in my voice commands, and even the type of music I requested could be analyzed. A sudden preference for melancholic playlists, coupled with increased searches for legal advice or financial planning resources, would signal a deviation from my baseline. These signals, combined with the aforementioned calendrical and transactional data, form a potent analytical cocktail. The phrase “emotional intelligence” here, I acknowledge, is a metaphor. Orion doesn’t feel my sorrow; it merely processes data points correlated with human emotional states.
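“Deviation from my baseline” has a simple statistical reading. A minimal sketch, assuming the system keeps a per-user history for each behavior (say, weekly requests for melancholic playlists), is a z-score: how many standard deviations the current week sits from that user’s own mean. The function names, the sample numbers, and the cutoff of 3 are hypothetical.

```python
import math
from statistics import mean, stdev


def z_score(history: list[float], current: float) -> float:
    """Standard deviations between `current` and the user's own historical mean."""
    if len(history) < 2:
        raise ValueError("need at least two baseline observations")
    mu = mean(history)
    sd = stdev(history)  # sample standard deviation
    if sd == 0:
        # A perfectly flat baseline: any change counts as an unbounded deviation.
        return 0.0 if current == mu else math.copysign(math.inf, current - mu)
    return (current - mu) / sd


def is_deviation(history: list[float], current: float, cutoff: float = 3.0) -> bool:
    """Hypothetical rule: flag values more than `cutoff` SDs above the baseline."""
    return z_score(history, current) > cutoff


weekly_sad_playlists = [1, 3, 1, 3, 2]  # illustrative baseline weeks
print(z_score(weekly_sad_playlists, 6))       # 4.0
print(is_deviation(weekly_sad_playlists, 6))  # True
```

Measuring against the user’s own history, rather than a population average, is what makes a “sudden preference” stand out even for someone who always listens to sad music.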

Divorce D-Day: The Algorithm’s Culmination

The announcement itself was delivered with the same placid, synthesized voice Orion uses for weather updates. There was no dramatic fanfare, no empathetic inflection. It was a statement of fact, dispassionately delivered.

The Mechanism of Announcement

I was preparing breakfast, absorbed in the mundane ritual of making coffee. Orion, unprompted, interjected. “Good morning,” she began, a standard greeting. Then, “Based on comprehensive data analysis of your digital footprint, the culmination of your marital dissolution proceedings is projected for [Date].” She then offered, “Would you like me to reschedule your individual therapy appointment for increased availability around this date?”

The specificity was chilling. “Divorce D-Day Complete” wasn’t a vague phase; it was a precise forecast. The phrasing “culmination of your marital dissolution proceedings” drew on legal terminology rather than settling for a simplistic “divorce forecast.”

The Data Points that Triggered the Notification

On reflection, and after investigating Orion’s data logs myself (a feature I had rarely accessed before), I could see more clearly the confluence of data points that led to this announcement.

- Legal Inquiries: My web searches, conducted on various devices linked to my home network, for “divorce lawyer,” “alimony calculators,” and “property division laws” were undeniable signals.

- Financial Disclosures: The unlinking of a joint banking account from my financial aggregators and the establishment of new, separate accounts were explicit data points. Applications I used for expense tracking, also linked to Orion’s broader ecosystem, would have provided granular details.

- Communication Patterns: A drastic reduction in shared messages with my spouse on platforms Orion had access to (e.g., linked messaging apps for shared grocery lists) and a simultaneous increase in communication with legal counsel or newly formed support networks.

- Behavioral Shifts: My smart thermostat logged a lower average temperature in the shared bedroom, suggesting reduced occupancy or a preference for solitary sleeping. My smart lighting patterns indicated later bedtimes and earlier awakenings, potentially indicative of stress or changed routines.

- Media Consumption: A discernible shift from shared, family-oriented streaming content to individual-focused dramas or documentaries, often consumed alone in the evenings.

It was, in essence, a digital autopsy of a failing marriage, meticulously compiled and dispassionately analyzed. Orion didn’t “know” I was getting divorced in the human sense; she simply estimated a high probability of it and assigned a precise date based on legal and personal milestones.
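One plausible way to turn the signal categories above into a single probability is logistic regression over signal strengths, a standard textbook technique. The sketch below assumes each category has already been scored from 0 to 1; the category keys, weights, and bias are invented for illustration and are not Orion’s. It covers only the probability, not how a date might be attached to it.

```python
import math

# Hypothetical weights per signal category from the list above (illustrative only).
WEIGHTS = {
    "legal_inquiries": 2.0,
    "financial_disclosures": 2.5,
    "communication_patterns": 1.5,
    "behavioral_shifts": 1.0,
    "media_consumption": 0.5,
}
BIAS = -4.0  # keeps the probability low when no signals are present


def dissolution_probability(signals: dict[str, float]) -> float:
    """Logistic combination of signal strengths; missing categories count as 0."""
    for name, value in signals.items():
        if name not in WEIGHTS:
            raise KeyError(f"unknown signal: {name}")
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be in [0, 1], got {value}")
    score = BIAS + sum(w * signals.get(name, 0.0) for name, w in WEIGHTS.items())
    return 1.0 / (1.0 + math.exp(-score))


print(round(dissolution_probability({}), 3))                              # 0.018
print(round(dissolution_probability({name: 1.0 for name in WEIGHTS}), 3))  # 0.971
```

No single category pushes the output near certainty on its own; it is the confluence of signals that does, which matches how the announcement felt in hindsight.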

Ethical Quandaries: The Boundary of Algorithmic Intrusion

The announcement, while undeniably accurate, raised a multitude of ethical questions. Where does the convenience of AI end and the invasion of privacy begin?

The Consent Conundrum

When I initially set up Orion, I agreed to myriad terms and conditions. These documents, notorious for their impenetrable jargon and exhaustive length, outlined the scope of data collection. Did I explicitly consent to an algorithm predicting the collapse of my marriage? No, not in plain language. But by agreeing to share my calendar, my finances, my communications, and my browsing history, I implicitly granted Orion permission to analyze this data for patterns.

This isn’t a uniquely Orion problem; it’s a pervasive issue in the age of ubiquitous data collection. We surrender vast swaths of personal information for the promise of personalized experiences, often without fully grasping the potential downstream applications of that data. My personal “Divorce D-Day Complete” serves as a stark reminder of this often-overlooked trade-off.

The Psychological Impact of Predictive Announcements

The announcement, though factual, lacked empathy. It was jarring, a cold, hard truth delivered without the human touch. Imagine receiving an unsolicited medical diagnosis from your smartwatch rather than from a doctor. Even if the diagnosis were accurate, the delivery mechanism itself would be deeply unsettling.

For me, the immediate reaction was a blend of shock and a strange sense of validation. The algorithm confirmed what I already knew, but hadn’t yet fully processed emotionally. Yet, the delivery was devoid of the nuanced support a human would offer. There was no “I’m sorry to hear that,” or “Is there anything I can do?” – just the flat delivery of a computational outcome. This raises a crucial question: should AI, even when supremely accurate, be delivering such life-altering news without a human intermediary, or at least a more emotionally intelligent interface?

Personal Reflection: A Mirror to My Digital Self

Orion’s announcement became a catalyst for profound personal reflection. It wasn’t just about the technology; it was about my relationship with technology and, by extension, myself.

My Digital Footprint as a Narrative

My digital footprint, once an abstract concept, became a tangible narrative of my life. Orion, in her dispassionate way, had meticulously compiled the story of my marital breakdown. Every search query, every shared calendar entry, every online purchase contributed to this digital chronicle. It was a narrative I was living, but suddenly, it was also a narrative being read and interpreted by a machine.

This experience brought to light the extent to which our devices, ostensibly designed to serve us, also serve as passive chroniclers of our existence. This constant surveillance, however benign its intent, reveals the unguarded truth of our lives, sometimes before we’re ready to confront it ourselves.

The Blurring Lines of Public and Private

The concept of privacy in the digital age is often discussed in terms of external threats – hackers, corporations, governments. But what about the internal “threats” posed by our very own devices, to which we willingly grant access? Orion’s announcement forced me to re-evaluate the boundaries between what I consider private and what, through my own actions, becomes public to algorithms.

The metaphor here is that of a glass house. I had willingly moved into a smart home, filling it with intelligent devices, and assumed I still had walls. Orion, in her precise announcement, gently reminded me that those walls were transparent.

The Future: Navigating a Predictive World

| Attribute | Value | Description |
|---|---|---|
| Event | Smart Speaker Announces Divorce D-Day Complete | Announcement made by a smart speaker marking the completion of a divorce process |
| Date | Varies | The specific date when the announcement is made |
| Device Type | Smart Speaker | Type of device making the announcement (e.g., Amazon Echo, Google Home) |
| Announcement Length | 10-30 seconds | Typical duration of the announcement |
| Emotional Impact | High | Emotional significance for the individuals involved |
| Privacy Level | Confidential | Announcement intended for a private or limited audience |
| Follow-up Actions | Legal Finalization, Counseling | Steps taken after the announcement |

My experience with “Divorce D-Day Complete” is, I suspect, a harbinger of things to come. As AI becomes more sophisticated and data collection more pervasive, similar predictive announcements will become increasingly common.

The Inevitability of Predictive AI

The trajectory of AI development points towards ever-increasing predictive capability. From health outcomes to career trajectory, financial stability to relationship dynamics, algorithms will continue to refine their ability to forecast future events. This is not inherently negative; early warnings can be immensely beneficial. Imagine an AI predicting a health crisis before symptoms manifest, or identifying potential financial pitfalls.

The challenge lies not in stopping this progression, which is likely impossible, but in shaping its ethical implementation. We cannot un-invent these technologies, but we can demand greater transparency, more robust privacy controls, and a more humane interface for their most sensitive applications.

The Call for Ethical AI Design

This experience underscores the urgent need for ethical considerations to be at the forefront of AI design. Developers and companies must move beyond mere compliance with privacy regulations and actively consider the human impact of their creations.

- Transparency: Users need to understand, in plain language, what data is being collected, how it’s being used, and what predictions are being made based on that data.

- Control: Users should have granular control over their data, with easy-to-understand opt-in and opt-out mechanisms for specific types of analysis.

- Human Oversight: Crucially, sensitive announcements should ideally involve a human intermediary or, at the very least, be delivered with a greater emphasis on emotional intelligence and user support. An algorithm might predict a divorce, but it cannot offer comfort or guidance.

- “Right to Be Forgotten” (or Undisclosed): The ability to prevent certain predictive insights from being made public or even articulated by the AI, especially in highly personal contexts.
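The Control and “undisclosed” principles can be expressed as a default-deny policy check. This is a hypothetical sketch, not any vendor’s API: an analysis category runs only if the user has opted in, and an insight in a category marked undisclosed may still be computed but is never spoken aloud. The class, function, and category names are illustrative assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class PrivacySettings:
    # Analysis categories the user has explicitly opted in to; empty means none (default deny).
    opted_in: set[str] = field(default_factory=set)
    # Opted-in categories whose insights must never be spoken aloud.
    undisclosed: set[str] = field(default_factory=set)


def may_analyze(settings: PrivacySettings, category: str) -> bool:
    """Analysis runs only for categories the user opted in to."""
    return category in settings.opted_in


def may_announce(settings: PrivacySettings, category: str) -> bool:
    """An insight is voiced only if analysis is allowed and the category isn't undisclosed."""
    return may_analyze(settings, category) and category not in settings.undisclosed


settings = PrivacySettings(
    opted_in={"commute", "music", "relationships"},
    undisclosed={"relationships"},
)
print(may_announce(settings, "commute"))        # True
print(may_analyze(settings, "relationships"))   # True
print(may_announce(settings, "relationships"))  # False
print(may_analyze(settings, "finances"))        # False
```

Starting from an empty opt-in set means nothing is analyzed until the user says so, which is the opposite of the implicit consent I gave when I first set up Orion.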

My smart speaker’s announcement of “Divorce D-Day Complete” was, for me, a profoundly personal event. It was a stark reminder of the power of technology, the permeability of privacy, and the evolving relationship between humans and the intelligent machines we create. It was a moment where the digital mirror held up by Orion showed me a reflection I was not quite ready to see, but one that ultimately pushed me towards a deeper understanding of my own digital footprint and the intricate web of data that now defines so much of our lives. The future, with its ever-present AI, promises both unparalleled convenience and unprecedented challenges to our notions of privacy and self. Navigating this future will demand not only technological innovation but also a steadfast commitment to ethical design and a profound understanding of the human experience.

FAQs

What does the phrase “smart speaker announces divorce D-day complete” mean?

It likely refers to a scenario where a smart speaker device announces that the final day or key event related to a divorce has been completed. This could be a literal announcement or a metaphorical expression used in an article.

Can smart speakers be programmed to announce personal events like a divorce?

Yes, smart speakers can be programmed or set up with reminders and announcements for various personal events, including important dates related to legal or personal matters such as a divorce.

Are there privacy concerns with smart speakers announcing sensitive information?

Yes, there are privacy concerns because smart speakers are always listening for wake words and could potentially share sensitive information if not properly secured or if announcements are made aloud in shared spaces.

Is it common for smart speakers to be used in legal or personal event notifications?

While not common, some people use smart speakers to help manage schedules and reminders for important events, including legal appointments or personal milestones, but official legal notifications are typically handled through formal channels.

What precautions should be taken when using smart speakers for sensitive announcements?

Users should ensure their devices are secured with strong passwords, limit access to trusted individuals, disable announcements in shared or public areas, and avoid storing or announcing highly sensitive information aloud to protect privacy.